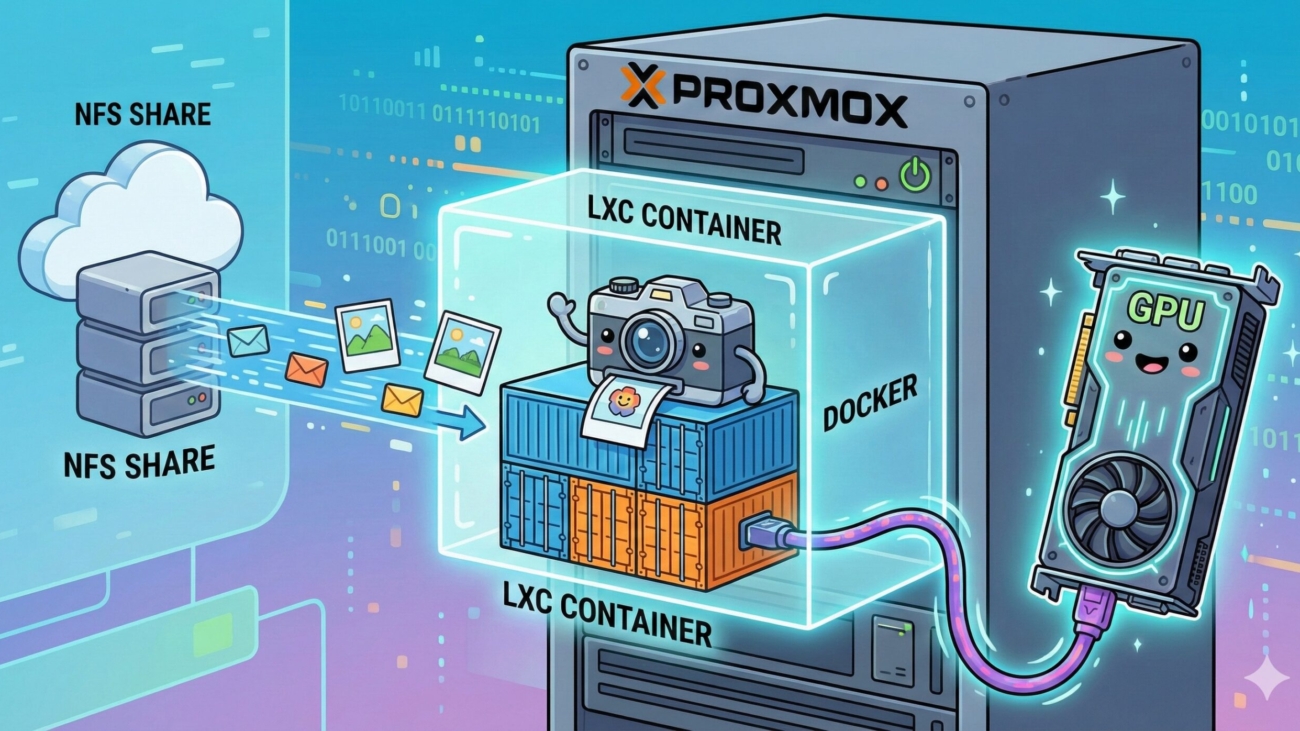

A technical walkthrough on self-hosting the Immich photo library inside a lightweight, unprivileged Proxmox LXC container. It covers securely mapping a TrueNAS NFS share so the unprivileged container can use it without permission errors, passing an NVIDIA GPU (GTX 1080 Ti) through to the container, and deploying with Dockge. The result is a hardware-accelerated photo backup system with GPU-backed video transcoding and AI facial recognition.

From Wasted Space to Unified Storage

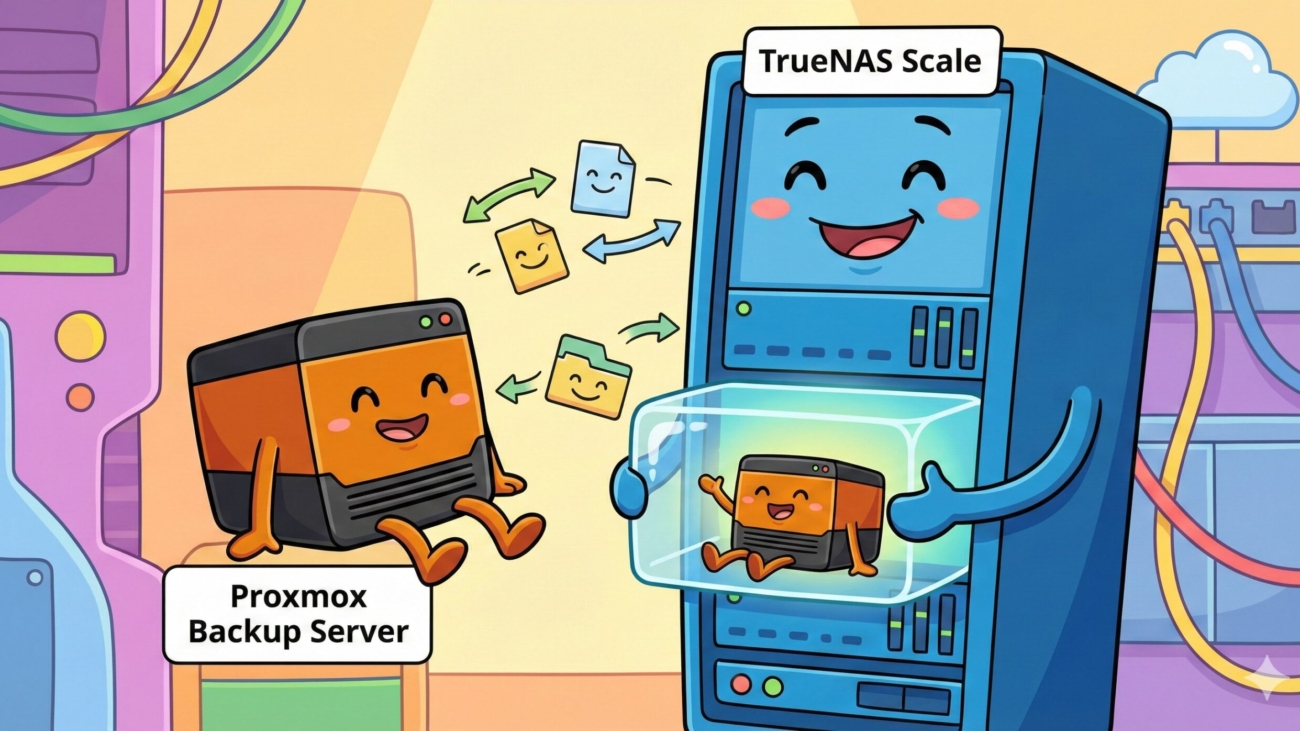

This guide shows how to repurpose an underutilized bare-metal backup machine into a TrueNAS Scale NAS that provides unified, shared storage. To keep backup capabilities without virtualization overhead, Proxmox Backup Server (PBS) runs directly on top of TrueNAS inside a lightweight Debian 12 container. The guide covers dataset mapping, bridge networking, and the installation steps needed for a low-resource backup and storage setup.

In the homelab world, it’s easy to fall into the trap of over-provisioning hardware for a single task. Until recently, I had a dedicated bare-metal machine running Proxmox Backup Server (PBS). It had 2 TB of usable storage, but my actual Proxmox VE backups only totaled around 350 GB.

Leaving all that unused space locked strictly to backups felt like a massive waste. At the same time, I didn’t have a dedicated NAS appliance on my network, and I really needed a centralized place for shared storage: somewhere to drop family photos, store videos, and keep all my Batocera save files in sync.

The solution? Nuke the bare-metal PBS installation, turn the hardware into a TrueNAS Scale appliance, and run Proxmox Backup Server on top of TrueNAS inside a lightweight Linux container. Here is a detailed look at how I pulled it off.